23. April 2026 By Plamen Kiradjiev

No AI Without IA

Isn’t it fascinating? You type a few prompts into a chat and get answers to your questions, or even a complete generated article. It has become part of our daily routine: we have all been using AI (Use AI) for a few years now and are simply amazed at how much more accurate and detailed AI models (Large Language Models, LLMs) have become over time. An even more fascinating recent development is the ability to generate an entire app with just a few prompts, without having to worry about coding. Especially in recent months, with tools like Claude Code, Google’s Antigravity, and Cursor, Generative AI (GenAI) has moved beyond the early stages of Vibe Coding and is democratizing the creation of solutions without any programming knowledge (Make AI).

Logically, one might ask the following two questions:

1. Will search become obsolete with Use AI?

2. Do you need to worry about architecture with Make AI?

The first question can now be answered with certainty: no. Intelligent search is an integral part of the next level of Use AI, and it remains the user’s responsibility to consult the source information to verify answers and avoid misinterpretations or hallucinations.

As for the second question, Make AI is on a steep climb up the hype curve: every announcement of a new LLM version causes turbulence in the stocks of enterprise software and SaaS providers. Just recall the fallout from announcements such as “Claude Code and Claude Cowork… SaaS-killer” or Claude’s ability to migrate COBOL code, even though IBM has been offering AI-powered COBOL modernization for years.

In the following eight theses, I will therefore focus on the second question: do we still need architecture, and integration architecture (IA) in particular, today? Along the way, I will give examples from the manufacturing sector, where I have been working for 14 years.

Architecture connects AI with your enterprise data

All AI relies on data, whether for training generative models or for their practical application. The greatest value is realized when AI prompts and model calls are combined with traditional data structures, enterprise databases, application APIs, and existing business logic under human supervision. This involves the classic integration of distributed applications and data pools within the enterprise, enabled by an enterprise-wide integration architecture. Key components of this architecture in manufacturing are:

- Standard interfaces, ranging from OPC UA to REST APIs as foundational elements

- The Manufacturing Service Bus (MSB) with protocol adapters, mapping, routing, and orchestration

- IoT gateways that convert legacy OT protocols into standard interfaces

- Event-driven messaging and queuing

- Non-functional aspects such as scalability, high availability, performance, security, versioning, and validation, particularly in regulated industries such as MedTech and Pharma
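To make the bus components above a little more tangible, here is a minimal in-process sketch of two of them: a protocol adapter that maps a vendor-specific payload onto a harmonized model, and a topic-based router that delivers the result to subscribers. All names (`LegacyPlcAdapter`, the payload keys `mach_no`, `st`, `cnt_ok`, the topic string) are hypothetical; orchestration, network transports, persistence, and security are deliberately left out.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class MachineStatus:
    """Harmonized data model shared by all consumers (hypothetical fields)."""
    machine_id: str
    state: str        # harmonized state, e.g. "RUNNING", "STOPPED", "FAULT"
    good_parts: int


class LegacyPlcAdapter:
    """Protocol adapter: maps a vendor-specific payload onto the harmonized model."""

    # Hypothetical vendor state codes -> harmonized states
    STATE_CODES = {0: "STOPPED", 1: "RUNNING", 2: "FAULT"}

    def to_harmonized(self, raw: dict) -> MachineStatus:
        return MachineStatus(
            machine_id=raw["mach_no"],
            state=self.STATE_CODES.get(raw["st"], "UNKNOWN"),
            good_parts=int(raw["cnt_ok"]),
        )


class ServiceBus:
    """Minimal topic-based router; a real MSB adds transports, persistence, and security."""

    def __init__(self) -> None:
        self._subscribers = defaultdict(list)

    def subscribe(self, topic: str, handler: Callable[[MachineStatus], None]) -> None:
        self._subscribers[topic].append(handler)

    def publish(self, topic: str, message: MachineStatus) -> int:
        """Deliver a message to every handler of the topic; return the delivery count."""
        handlers = self._subscribers.get(topic, [])
        for handler in handlers:
            handler(message)
        return len(handlers)


# Usage: consumers (dashboard, MES, AI agent) only ever see harmonized events
bus = ServiceBus()
received = []
bus.subscribe("machine/status", received.append)

adapter = LegacyPlcAdapter()
status = adapter.to_harmonized({"mach_no": "M-07", "st": 1, "cnt_ok": "1250"})
bus.publish("machine/status", status)
print(received[0])  # MachineStatus(machine_id='M-07', state='RUNNING', good_parts=1250)
```

The point of the separation is that adding a new machine type only requires a new adapter; every consumer, including a GenAI component, keeps working against the same harmonized model.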

An example of the successful integration of GenAI and classic integration architecture is GEC’s ONCITE Digital Production System. As a central hub for production-relevant data, ONCITE DPS integrates operational and machine data into a harmonized data model, thereby enabling unified visualization, analysis, and production control.

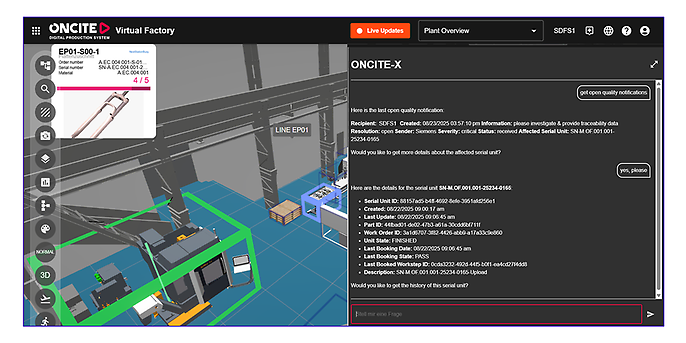

In addition to various dashboards and a 3D visualization of the shop floor, the AI component ONCITE-X enables conversational queries about current orders, quality, output, and machine status. It uses an MCP (Model Context Protocol) server to expose the operational data held in ONCITE DPS to the chat.

Figure 1: Query regarding open quality notifications in ONCITE DPS

The bidirectional integration of applications, data, and systems via the Manufacturing Service Bus in ONCITE DPS, together with its harmonized data model and standardized REST APIs, made it straightforward to add this AgenticAI extension and integrate it natively with human interaction. As a result, the first version of ONCITE-X was delivered within six weeks.
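Under the hood, MCP builds on JSON-RPC 2.0: a client can list the tools a server offers (`tools/list`) and invoke one of them (`tools/call`). The stdlib-only sketch below imitates that request/response shape to show how a single tool could expose open quality notifications to a chat. It is not the official MCP SDK and not the ONCITE-X implementation; the tool name `get_open_quality_notifications` and the in-memory list standing in for the production system's REST API are hypothetical.

```python
import json
from typing import List, Optional

# Hypothetical stand-in for data that would be fetched from the production system's REST API
QUALITY_NOTIFICATIONS = [
    {"id": "QN-101", "order": "4711", "status": "open", "defect": "Scratch on housing"},
    {"id": "QN-102", "order": "4712", "status": "closed", "defect": "Wrong torque"},
    {"id": "QN-103", "order": "4711", "status": "open", "defect": "Missing label"},
]


def get_open_quality_notifications(order: Optional[str] = None) -> List[dict]:
    """Tool implementation: open notifications, optionally filtered by order number."""
    return [qn for qn in QUALITY_NOTIFICATIONS
            if qn["status"] == "open" and (order is None or qn["order"] == order)]


TOOLS = {
    "get_open_quality_notifications": {
        "description": "List open quality notifications, optionally for one order.",
        "inputSchema": {"type": "object", "properties": {"order": {"type": "string"}}},
        "handler": get_open_quality_notifications,
    }
}


def _error(req_id, code: int, message: str) -> str:
    return json.dumps({"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}})


def handle_request(raw: str) -> str:
    """Dispatch a JSON-RPC 2.0 request in the style of MCP's tools/list and tools/call."""
    req = json.loads(raw)
    if req["method"] == "tools/list":
        result = {"tools": [
            {"name": name, "description": t["description"], "inputSchema": t["inputSchema"]}
            for name, t in TOOLS.items()
        ]}
    elif req["method"] == "tools/call":
        tool = TOOLS.get(req["params"]["name"])
        if tool is None:
            return _error(req["id"], -32602, "Unknown tool")
        data = tool["handler"](**req["params"].get("arguments", {}))
        # Tool results carry a list of content items; here a single JSON text item
        result = {"content": [{"type": "text", "text": json.dumps(data)}]}
    else:
        return _error(req["id"], -32601, "Method not found")
    return json.dumps({"jsonrpc": "2.0", "id": req["id"], "result": result})


# Usage: what the LLM client would send after deciding to call the tool
call = {"jsonrpc": "2.0", "id": 1, "method": "tools/call",
        "params": {"name": "get_open_quality_notifications", "arguments": {"order": "4711"}}}
print(handle_request(json.dumps(call)))
```

The LLM never touches the database directly; it only sees the tool description and the tool's output, which is exactly what makes a harmonized model and clean APIs behind the tool so valuable.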

Architecture allows the use of different data models and standards

As Product Owner for the Traceability project within the Factory-X initiative, I experienced another interesting application of GenAI closely tied to integration architecture: linking data models that are semantically similar but syntactically different. The initiative, sponsored by the Federal Ministry for Economic Affairs and Energy (BMWE), focuses on the secure, standardized, and sovereign exchange of data between factory equipment suppliers and operators in a data space, typically via a so-called MX-Port, without relinquishing data ownership or control.

The MX-Port handles authentication and authorization in the distributed data space, but it is “blind” to the payload. In the Traceability project, one of the 11 use cases covered, our team faced the challenge of defining a traceability data model that is both standardized and flexible. We chose a minimalist approach, first identifying the most important data objects for traceability: order-related entries, process data (configuration data, quality test data, alarms/messages), and machine statuses, each with references to products, serial numbers, batches, the as-is BOM (bill of materials), the equipment or materials used, etc. Combined into a history record, they can be made available for exchange via the data space.

For data beyond the minimal set of defined fields, we provided a key-value object called Attributes. The entries are linked via foreign-key IDs or timestamps. Since every MES can provide the data for such a history record, an integration service or MSB can transform it into the defined format. Using two MCP servers, one on the receiving side of the MX-Port and one connected to ONCITE DPS (standing in for any MES), together with the corresponding tools, we demonstrated how a customer’s quality notification can be received via the data space and analyzed, and a traceability history record returned. The mappings are performed by the MCP tools with the help of the LLM in use (in this case, Gemini Flash).
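A minimal sketch of such a history record and the transformation an integration service might perform, assuming a flat row exported from an MES. The core-fields-plus-`attributes` structure and the linkage via IDs and timestamps follow the description above; the concrete field names and the MES column names (`OrderNo`, `SN`, `Lot`, and so on) are hypothetical and not taken from the Factory-X specification.

```python
from dataclasses import dataclass, field
from datetime import datetime
from typing import Dict, List

# Hypothetical core fields of the minimal history record
CORE_FIELDS = {"order_id", "serial_number", "batch", "equipment_id", "event_type", "timestamp"}

# Hypothetical column mapping for one particular MES export
MES_COLUMN_MAP = {
    "OrderNo": "order_id",
    "SN": "serial_number",
    "Lot": "batch",
    "Station": "equipment_id",
    "Event": "event_type",
    "Time": "timestamp",
}


@dataclass
class HistoryEntry:
    order_id: str
    serial_number: str
    batch: str
    equipment_id: str
    event_type: str            # e.g. "STATUS", "QUALITY_TEST", "ALARM"
    timestamp: datetime
    attributes: Dict[str, str] = field(default_factory=dict)  # everything beyond the core


def to_history_entry(mes_row: Dict[str, str]) -> HistoryEntry:
    """Map one MES row onto the standard record; unmapped columns go into attributes."""
    core, attributes = {}, {}
    for column, value in mes_row.items():
        target = MES_COLUMN_MAP.get(column)
        if target in CORE_FIELDS:
            core[target] = value
        else:
            attributes[column] = value
    core["timestamp"] = datetime.fromisoformat(core["timestamp"])
    return HistoryEntry(**core, attributes=attributes)


def trace(entries: List[HistoryEntry], serial_number: str) -> List[HistoryEntry]:
    """Chronological history of one serial number, linked via ID and timestamp."""
    return sorted((e for e in entries if e.serial_number == serial_number),
                  key=lambda e: e.timestamp)
```

In the demonstrator described above, the LLM assists with such mappings through MCP tools; in this sketch the mapping table is simply hard-coded, so each additional MES would need its own `MES_COLUMN_MAP`.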

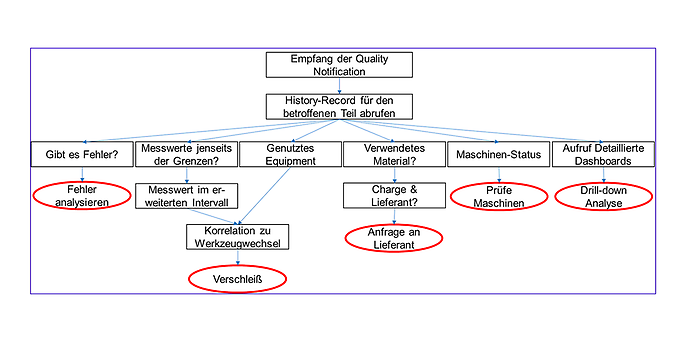

Figure 2: AI-assisted traceability analysis based on the history record – dedicated tools provide the analyses

By pairing GenAI with a robust integration architecture, this example shows how compliance with standards can be combined with flexibility.

Architecture protects your data

With the help of MCP and the corresponding tools, corporate data can be made available to GenAI in a controlled and standardized manner. However, important data protection aspects must be taken into account here.

- Does my confidential data remain within the company?

- Could there be unintended access to the data via the MCP server?

- Is it possible to access confidential data through prompt engineering?

- Could there be unintended use of the data to train the LLM in use?

- How do I reduce hallucinations?

- Can AI agents pose a security risk to my entire intranet infrastructure?

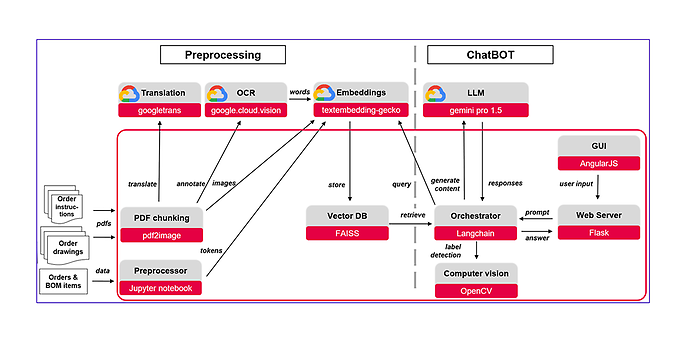

A proven method for improving accuracy and data protection is Retrieval-Augmented Generation (RAG). Documents or structured data are split into chunks, which an embedding model tokenizes and converts into vectors. These vectors are indexed in a vector database together with the chunks and annotations that map them back to the original data. When a search query (a prompt) is entered, the same embedding model converts it into a vector, which is compared with the stored vectors by semantic similarity; the matches are then ranked. Filters can also be applied, for example to restrict results to specific user groups. Because the model answers from retrieved content, RAG provides significantly greater control over data processing and substantially reduces hallucinations.
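The retrieval side of this pipeline fits in a few lines. To stay self-contained, the sketch below replaces a real embedding model with a toy term-frequency vector and uses a Python list instead of a vector database; the fixed-size chunking, the `allowed_groups` metadata used for the user-group filter, and the sample texts are hypothetical. Only the structure (chunk, embed, store with annotations, embed the query, filter, rank by cosine similarity) reflects the RAG steps described above.

```python
import math
import re
from collections import Counter
from dataclasses import dataclass
from typing import List, Set, Tuple


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a term-frequency vector."""
    return Counter(re.findall(r"\w+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


@dataclass
class Chunk:
    text: str
    source: str                 # annotation mapping the chunk back to the original data
    allowed_groups: frozenset   # metadata used for access filtering
    vector: Counter


def chunk_document(text: str, source: str, groups: Set[str], size: int = 50) -> List[Chunk]:
    """Split a document into fixed-size word windows and embed each one."""
    words = text.split()
    chunks = []
    for i in range(0, len(words), size):
        piece = " ".join(words[i:i + size])
        chunks.append(Chunk(piece, source, frozenset(groups), embed(piece)))
    return chunks


def retrieve(index: List[Chunk], query: str, user_group: str, k: int = 3) -> List[Tuple[float, Chunk]]:
    """Embed the query, drop chunks the user may not see, rank the rest by similarity."""
    q = embed(query)
    candidates = [c for c in index if user_group in c.allowed_groups]
    ranked = sorted(((cosine(q, c.vector), c) for c in candidates),
                    key=lambda pair: pair[0], reverse=True)
    return [(score, c) for score, c in ranked[:k] if score > 0]
```

Note that the access filter runs before ranking, so chunks outside the user's group can never reach the LLM's context, regardless of how the prompt is phrased.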

An example of RAG in practice is the AI-powered assembly assistant piloted for specialized assembly at a plant of a well-known machine manufacturer. There, RAG was used to refine assembly instructions generated solely from order BOMs and customer drawings. The following diagram shows the data flow in its two phases, preprocessing and chatbot.

Figure 3: Architecture of an AI-powered assembly assistant

It should be noted that this successful pilot, which was in high demand among operators, planners, and plant management, fell behind schedule. The reason was a delay in the digitalization initiative that was supposed to ensure a continuous flow of orders, BOMs, and drawings into preprocessing. In other words, the lack of an implemented integration architecture destroyed the business case for the AI solution.

Embedding models are fundamentally stateless: unlike chat-based LLM interactions, they have no conversation memory and do not build up a context window, because each query is matched selectively against individual chunks. A residual risk remains if responses are refined by an external LLM. However, 100% data protection is achievable with self-hosted embedding models and LLMs. Another option is to rely on the guarantees that enterprise accounts provide, such as those offered by Azure, AWS, or Google. With consumer and free accounts, there is no guarantee that the provider will not log, contextualize, or train on chat histories.

Architecture increases speed and user-friendliness

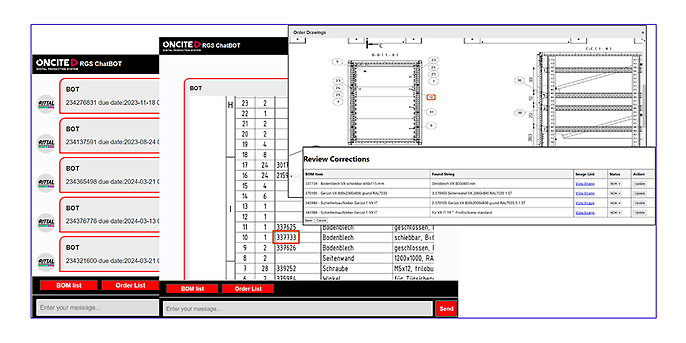

Another aspect of the AI-powered assembly assistant was the specific set of requirements from operators on the factory floor:

- Complex hierarchical BOM structures

- High precision is essential

- No hallucinations allowed

- No searching, no prompting

- No long wait times (for thinking)

- More than 90% hands-free

- Minimal intervention: click, scroll, zoom

- Controlled power-user feedback

The application architecture was therefore designed so that, although the frontend resembles a chat interface, navigation is kept minimal, as the requirements demand. To avoid the wait times typical of GenAI, online usage was decoupled from the batch preprocessing phase and relies primarily on local content from the vector database:

Figure 4: AI-powered assembly assistant – from the order to the BOM item and then to the highlighted drawing section

The example demonstrates a clever combination of GenAI, integration, and application architecture tailored to customer requirements.
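The decoupling of slow, model-heavy preprocessing from the fast online path can be sketched as follows. `generate_instruction` is a hypothetical placeholder for the expensive GenAI/RAG step, which runs only in the batch phase; the online functions perform pure lookups in the precomputed store, so an operator's click never waits for model inference. The BOM structure, with hierarchical position strings such as `"1.2"`, is an illustrative assumption.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class BomItem:
    position: str      # hierarchical position, e.g. "1.2"
    part_number: str
    drawing_ref: str   # pointer to the drawing section to highlight


# order_id -> position -> (BOM item, precomputed instruction)
Store = Dict[str, Dict[str, Tuple[BomItem, str]]]


def generate_instruction(item: BomItem) -> str:
    """Hypothetical placeholder for the slow GenAI/RAG call; runs only in the batch phase."""
    return f"Install {item.part_number} at position {item.position} (see {item.drawing_ref})"


def preprocess_order(store: Store, order_id: str, bom: List[BomItem]) -> None:
    """Batch phase: generate and persist an instruction for every BOM item of an order."""
    store[order_id] = {item.position: (item, generate_instruction(item)) for item in bom}


def children(store: Store, order_id: str, parent: str = "") -> List[str]:
    """Online phase: list the sub-positions of a BOM node with a pure lookup, no model call."""
    prefix = f"{parent}." if parent else ""
    level = parent.count(".") + 1 if parent else 0
    return sorted(p for p in store[order_id]
                  if p.startswith(prefix) and p.count(".") == level)


def instruction(store: Store, order_id: str, position: str) -> str:
    """Online phase: return the precomputed instruction immediately."""
    return store[order_id][position][1]
```

Navigating the hierarchy level by level (order, BOM item, drawing section) maps naturally onto the click, scroll, and zoom interactions the operators asked for.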

Architecture creates confidence

“An Open-Source Chinese Model Just Topped the Coding Leaderboard… Z AI released GLM-5.1, an open-source model that scored…”

We encounter similar reports almost every week. On the one hand, models keep outperforming one another in performance and precision; on the other hand, different models are better or worse suited to different use cases. Last but not least, costs have to be balanced as well. For data protection reasons, some use cases can often only be implemented on self-hosted platforms. With an orchestrator, that is, a workflow automation or AI development platform, complex agent systems and software solutions can be built; in particular, such platforms make it possible to switch between LLMs or to use specific LLMs for specific purposes.

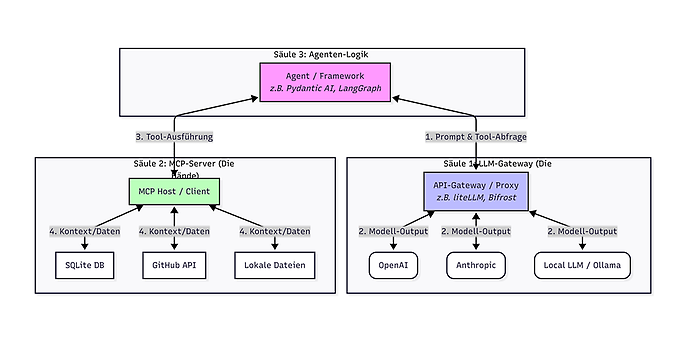

The 3-Pillar Stack

Here, too, the application’s architecture plays a role. One example is the 3-pillar stack, a modern software architecture for AI applications based on the strict separation of intelligence, functionality, and control.

The first pillar is the LLM gateway (LiteLLM, Bifrost), which acts as an abstraction layer that makes various language models available via a unified interface, thereby ensuring vendor independence.
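Conceptually, the gateway is an adapter layer: application code talks to one interface and the concrete model is chosen by configuration. The sketch below illustrates only that pattern, with a hypothetical in-memory `EchoBackend` and a simple one-level fallback; it is not the API of LiteLLM or Bifrost, which provide this abstraction (plus authentication, logging, and more) over real provider APIs.

```python
from typing import Dict, Optional, Protocol


class ChatBackend(Protocol):
    """Anything that can turn a prompt into a completion."""
    def complete(self, prompt: str) -> str: ...


class EchoBackend:
    """Hypothetical stand-in for a provider (cloud API or self-hosted model)."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


class LLMGateway:
    """Single entry point: callers request a logical alias, never a vendor SDK."""

    def __init__(self) -> None:
        self._routes: Dict[str, ChatBackend] = {}
        self._fallbacks: Dict[str, str] = {}

    def register(self, alias: str, backend: ChatBackend, fallback: Optional[str] = None) -> None:
        self._routes[alias] = backend
        if fallback is not None:
            self._fallbacks[alias] = fallback

    def complete(self, alias: str, prompt: str) -> str:
        """Route to the configured backend; on connection failure try the fallback once."""
        try:
            return self._routes[alias].complete(prompt)
        except ConnectionError:
            if alias not in self._fallbacks:
                raise
            return self._routes[self._fallbacks[alias]].complete(prompt)


# Usage: swapping a provider is a configuration change, not a code change
gateway = LLMGateway()
gateway.register("default", EchoBackend("self-hosted"))
print(gateway.complete("default", "Summarize the shift report"))
```

Because callers only know aliases such as `"default"`, moving a use case from a cloud model to a self-hosted one for data protection reasons does not touch the application logic.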

The second pillar comprises the Model Context Protocol (MCP), which serves as a standardized connection layer to provide the model with external context and tools—such as database access or API integrations—in a modular manner.

The third pillar is the agent framework (Pydantic AI or LangGraph), which handles the logical orchestration and determines when which intelligence accesses which tools. This modular structure allows individual components to be swapped out or scaled without having to reimplement the entire application logic.
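The division of labor between the pillars can be illustrated with a deliberately simplified agent loop: the model proposes either a tool call or a final answer, the tool layer executes the call, and the agent framework decides when to stop. The scripted `fake_model` replaces a real LLM so the example runs deterministically, and the `machine_status` tool is hypothetical; this sketches the pattern, not how Pydantic AI or LangGraph are implemented.

```python
import json
from typing import Callable, Dict, List


# Tool layer (the role MCP plays in the stack); hypothetical tool and data
def machine_status(machine_id: str) -> dict:
    return {"machine_id": machine_id, "state": "RUNNING", "oee": 0.82}


TOOLS: Dict[str, Callable[..., dict]] = {"machine_status": machine_status}


def fake_model(messages: List[dict]) -> dict:
    """Scripted stand-in for an LLM: first requests a tool, then answers from its result."""
    if not any(m["role"] == "tool" for m in messages):
        return {"tool": "machine_status", "args": {"machine_id": "M-07"}}
    result = json.loads(messages[-1]["content"])
    return {"answer": f"{result['machine_id']} is {result['state']} (OEE {result['oee']:.0%})."}


def run_agent(question: str, model: Callable[[List[dict]], dict], max_steps: int = 5) -> str:
    """Agent framework: alternate between model and tools until an answer or the step limit."""
    messages = [{"role": "user", "content": question}]
    for _ in range(max_steps):
        decision = model(messages)
        if "answer" in decision:
            return decision["answer"]
        tool = TOOLS.get(decision["tool"])
        output = tool(**decision["args"]) if tool else {"error": "unknown tool"}
        messages.append({"role": "tool", "content": json.dumps(output)})
    return "Stopped: step limit reached."


print(run_agent("How is machine M-07 doing?", fake_model))
```

The step limit is a small example of the "control" pillar: the framework, not the model, decides how far an agent may go.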

Here, too, data protection requires deciding where the gateway and agent logic run, locally (LiteLLM, Bifrost, Portkey AI Gateway, or Ollama) or via cloud gateways such as OpenRouter or hosted Portkey, and where the LLM itself is deployed.

Architecture saves money

Well-designed and well-programmed applications typically make economical, controlled individual API calls to LLMs. The costs for the examples above ranged from €10 to €20 per month. Development platforms such as GitHub Copilot, Cursor, Antigravity, or Claude Code, on the other hand, make intensive and frequent use of a predefined set of LLMs.

The economical use of LLMs within agent-based systems is based on the consistent decoupling of computationally intensive reasoning processes from purely administrative or exploratory tasks.

Implementing a Tiered Skill Architecture ensures that expensive, quota-limited high-end models such as Claude Opus 4.6 or Gemini 3.1 Pro are reserved exclusively for complex logical problems, security analyses, or architectural questions.

The methodological approach is divided into three core areas:

- Contextual Preprocessing (Context Pruning): A high-performance, cost-efficient model (Gemini 3 Flash) serves as the primary instance. It searches through extensive data sets and generates condensed briefings. This significantly reduces the token load for the downstream high-end model, as it only needs to process the relevant “signal” rather than the entire data noise.

- Condition-Based Routing: Logical triggers are defined via a skill manifest file. Tasks involving standard boilerplate, documentation, or simple syntax corrections are automatically delegated to more efficient models. A “model upgrade” occurs only when predefined complexity thresholds are exceeded.

- Modularization through procedural skills: Routine processes are stored as hard-coded functions (skills). Instead of regenerating the planning of these processes through expensive inference steps with every call, the LLM limits itself to the targeted invocation of predefined tools. This minimizes the number of output tokens to be generated while simultaneously increasing the reliability of execution.
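Condition-based routing and procedural skills can be sketched together. The manifest format, the toy complexity heuristic, the threshold of 0.6, and the placeholder tier IDs `efficient-model` and `premium-model` are all hypothetical assumptions for illustration; no real provider API is called.

```python
from dataclasses import dataclass

# Hypothetical skill manifest (in practice e.g. a YAML or JSON file in the repository)
SKILL_MANIFEST = {
    "tiers": {"efficient": "efficient-model", "premium": "premium-model"},  # placeholder IDs
    "upgrade_threshold": 0.6,
    "always_premium": {"security_review", "architecture"},
    "procedural_skills": {"format_changelog", "bump_version"},
}

COMPLEX_HINTS = ("concurrency", "migration", "race condition", "refactor")


@dataclass
class Task:
    kind: str            # e.g. "docs", "boilerplate", "security_review"
    description: str
    files_touched: int


def complexity(task: Task) -> float:
    """Toy complexity score in [0, 1]; a real system might ask a cheap model instead."""
    score = min(task.files_touched / 10, 1.0) * 0.5
    if any(hint in task.description.lower() for hint in COMPLEX_HINTS):
        score += 0.5
    return score


def route(task: Task, manifest: dict = SKILL_MANIFEST) -> str:
    """Return "skill" for hard-coded procedures, otherwise the model tier to call."""
    if task.kind in manifest["procedural_skills"]:
        return "skill"  # deterministic function: no inference, no output tokens
    if task.kind in manifest["always_premium"]:
        return manifest["tiers"]["premium"]
    if complexity(task) > manifest["upgrade_threshold"]:
        return manifest["tiers"]["premium"]  # "model upgrade" above the threshold
    return manifest["tiers"]["efficient"]
```

Keeping the rules in a manifest rather than in code lets teams tune thresholds and tier assignments as model prices and quotas change.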

This granular control of model resources can reduce operating costs and the consumption of usage quotas by up to 90% while maintaining consistent output quality.

Architecture combined with methodology enables control over AI

One of the biggest concerns when using AgenticAI is the risk of losing control. A recent example was a report by a security researcher at Meta about how an OpenClaw AI agent accidentally deleted her entire email inbox. It’s easy to imagine the consequences of an agent running amok on a corporate intranet. The key is to deploy these advanced technologies with full understanding and awareness. The solution is to combine a clean architecture with a methodology based on experience and best practices.

With adSCAILE (adesso Smart Cycle for AI-enhanced Lightweight Software Engineering), adesso is introducing a technology-agnostic process for scalable, agent-based software development.

Unlike reactive AI tools, the AI agents used here act proactively and in a goal-oriented way: they independently plan complex tasks, break them down into sub-steps, and implement them with the help of external tools. This covers the entire cycle from planning through code generation to testing.

The process is based on two central pillars:

- Structured Framework: adSCAILE incorporates years of project expertise and best practices into a set of rules that ensures the efficiency of the AI agents and prevents undesirable outcomes.

- Hybrid Role Distribution: Humans act as Orchestrators, defining strategic goals and making architectural decisions. The AI handles the operational implementation in shortened development cycles of two to four days.

The goal of using adSCAILE is to significantly accelerate iterations, improve software quality, and reduce time-to-market for both new developments and the modernization of legacy systems.

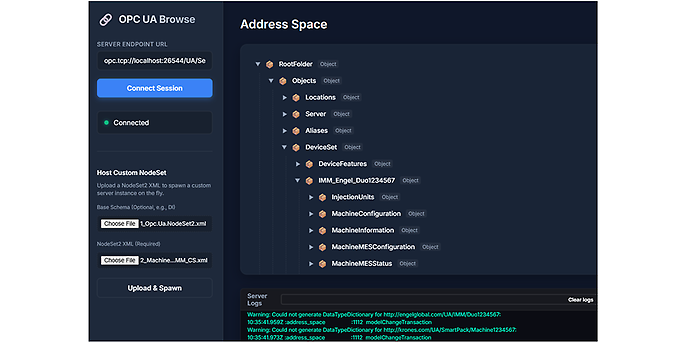

Since adSCAILE is tool-agnostic, I tested the methodology in a small trial with Antigravity. It was my third attempt at a similar use case: creating an OPC UA browse application based on a NodeSet file that defines the server’s data model according to the VDMA Companion Specifications. I needed to be able to create an OPC UA server for simulation tests in a project. In my first attempt, shortly after ChatGPT was announced, the result was a code snippet that served merely as an idea for the backend. My second attempt, less than a year ago, used Vibe Coding with GitHub Copilot and produced a program whose code structure became increasingly convoluted with each iteration, despite defined guardrails.
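For readers unfamiliar with the use case: a NodeSet2 XML file describes an OPC UA address space as `UAObject`, `UAVariable`, and other node elements, linked by `Reference` elements, where `IsForward="false"` marks an inverse reference. The sketch below uses only Python's standard library to parse such a file and print the browse tree. The tiny inline NodeSet is made up, and the sketch assumes symbolic reference types and follows only `HasComponent` and `Organizes`; real companion specifications also use `HasProperty`, type definitions, aliases, and multiple namespaces.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

NS = {"ua": "http://opcfoundation.org/UA/2011/03/UANodeSet.xsd"}
HIERARCHICAL = {"HasComponent", "Organizes"}  # simplification: only these two are followed

NODESET = """<UANodeSet xmlns="http://opcfoundation.org/UA/2011/03/UANodeSet.xsd">
  <UAObject NodeId="ns=1;i=5001" BrowseName="1:Press01">
    <DisplayName>Press01</DisplayName>
    <References><Reference ReferenceType="Organizes" IsForward="false">i=85</Reference></References>
  </UAObject>
  <UAVariable NodeId="ns=1;i=6001" BrowseName="1:Temperature" DataType="Double">
    <DisplayName>Temperature</DisplayName>
    <References><Reference ReferenceType="HasComponent" IsForward="false">ns=1;i=5001</Reference></References>
  </UAVariable>
  <UAVariable NodeId="ns=1;i=6002" BrowseName="1:Pressure" DataType="Double">
    <DisplayName>Pressure</DisplayName>
    <References><Reference ReferenceType="HasComponent" IsForward="false">ns=1;i=5001</Reference></References>
  </UAVariable>
</UANodeSet>"""


def build_tree(xml_text: str):
    """Collect display names and parent -> children edges from hierarchical references."""
    root = ET.fromstring(xml_text)
    names, children = {}, defaultdict(list)
    for node in root:
        node_id = node.get("NodeId")
        if node_id is None:
            continue  # e.g. <Aliases> or <Models> sections
        names[node_id] = node.findtext("ua:DisplayName", default=node.get("BrowseName"), namespaces=NS)
        for ref in node.iterfind("ua:References/ua:Reference", NS):
            if ref.get("ReferenceType") not in HIERARCHICAL:
                continue
            target = ref.text.strip()
            if ref.get("IsForward", "true").lower() == "false":
                children[target].append(node_id)  # inverse reference: target is the parent
            else:
                children[node_id].append(target)  # forward reference: target is the child


    return names, children


def render(names, children, node_id, depth=0, path=()):
    """Indented browse tree; unknown nodes (e.g. the standard Objects folder) show their NodeId."""
    if node_id in path:
        return []  # guard against cyclic references in malformed files
    lines = ["  " * depth + names.get(node_id, node_id)]
    for child in sorted(children.get(node_id, [])):
        lines.extend(render(names, children, child, depth + 1, path + (node_id,)))
    return lines


names, children = build_tree(NODESET)
print("\n".join(render(names, children, "i=85")))  # i=85 is the standard Objects folder
```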

The result of my latest attempt was a finished application that not only maintained its clean code base through multiple iterations and expansions but also enabled team-based development with my colleague using Claude Code as part of the streb framework. The development process went through all stages—from generating issues, through creating use cases, architecture, and workflows, to programming and running the created test cases.

Here is the result after a good handful of iterations:

Figure 5: An OPC UA server simulation created using the adSCAILE Agentic methodology

Thanks to the “confirm before acting” principle, every stage of the process could be checked, validated, and adjusted. This provides full control.
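The “confirm before acting” principle can be expressed as a simple gate in an agent pipeline: each stage proposes an artifact, and nothing is passed on or executed until a human approves (possibly after editing) or rejects it. The stage names mirror the process described above; the `propose` and `approve` callbacks and the pipeline structure are a hypothetical sketch, not part of adSCAILE itself.

```python
from dataclasses import dataclass
from typing import Callable, Dict, Optional

STAGES = ["issues", "use_cases", "architecture", "workflows", "code", "tests"]


@dataclass
class Proposal:
    stage: str
    content: str


def run_pipeline(
    propose: Callable[[str, Dict[str, str]], str],
    approve: Callable[[Proposal], Optional[str]],
) -> Dict[str, str]:
    """Run the stages in order; approve() returns the (possibly edited) content or None to stop."""
    accepted: Dict[str, str] = {}
    for stage in STAGES:
        proposal = Proposal(stage, propose(stage, accepted))
        decision = approve(proposal)
        if decision is None:
            break  # rejected: nothing downstream is generated or executed
        accepted[stage] = decision
    return accepted
```

Because each stage only sees previously approved artifacts, a human correction early on (for example in the architecture) automatically shapes everything generated afterwards.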

Architecture creates order

The democratization of software development allows everyone in the company to turn their ideas into code. Jensen Huang, CEO of Nvidia, has proposed a new compensation model in which engineers receive AI computing tokens worth about 50% of their base salary to boost productivity.

However, the low barrier to entry of Make AI also creates ideal conditions for a chaotic proliferation of applications, admittedly interesting ones, that sometimes solve the same problems and repeatedly burn through large numbers of tokens across the company. From a corporate perspective, it is essential to establish order here. The solution is provided by another architectural discipline: Enterprise Architecture (EA).

In agent-based software development, EA assumes a critical control and steering function to reconcile the agility of AI agents with the requirements of IT governance. Since agents can autonomously generate code and allocate external resources, EA defines binding guidelines in the form of standardized interfaces, security requirements, and compliance policies. This governance ensures that agent-generated applications do not create technical debt or foster shadow IT structures, but are seamlessly integrated into the company’s existing infrastructure planning. Here, EA acts as a “system architect” that ensures interoperability between different agent ecosystems and institutionalizes quality assurance through automated architecture checks (Architecture-as-Code).
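Automated architecture checks (Architecture-as-Code) come down to machine-readable rules evaluated against a machine-readable description of each application, for example in a CI pipeline or before an app is admitted to a central catalog. The manifest fields and the three rules below are invented for illustration; in practice, dedicated policy or architecture-testing tools are typically used, but the principle is the same.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set

# Hypothetical EA guidelines, versioned alongside the code
ALLOWED_INTERFACES = {"REST", "OPC UA", "MQTT"}
APPROVED_LLM_GATEWAYS = {"corporate-gateway"}
REQUIRED_METADATA = ("owner", "data_classification")


@dataclass
class AppManifest:
    name: str
    interfaces: Set[str]
    llm_gateway: Optional[str] = None
    metadata: Dict[str, str] = field(default_factory=dict)


def check(app: AppManifest) -> List[str]:
    """Evaluate all rules; an empty list means the app complies with the guidelines."""
    violations = [f"{app.name}: non-standard interface '{i}'"
                  for i in sorted(app.interfaces - ALLOWED_INTERFACES)]
    if app.llm_gateway is not None and app.llm_gateway not in APPROVED_LLM_GATEWAYS:
        violations.append(f"{app.name}: LLM access must go through an approved gateway")
    for key in REQUIRED_METADATA:
        if key not in app.metadata:
            violations.append(f"{app.name}: missing required metadata '{key}'")
    return violations
```

Running such checks automatically on every agent-generated app turns EA guidelines from documents into gates, which is what keeps high development speed from turning into shadow IT.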

To fully leverage the innovation potential of this accelerated development, establishing an Enterprise App Store is recommended. This central platform serves as a curated marketplace for the applications and microservices created agentically within the company.

The concept of the Enterprise App Store provides the IT organization with assurance for centrally offered apps by verifying compliance with EA guidelines, ensuring reusability, usage and cost transparency, as well as license management and compliance with regulatory requirements (EU AI Act).

By combining strict EA governance with a low-threshold app store model, the IT organization transforms from a pure service provider into a platform provider that enables the speed of AI development to be managed securely and scalably.

Conclusion

With a stable architecture that spans data integration, application design, and enterprise-wide usage, combined with a methodology based on best practices, AI becomes a driver of productivity and acceleration, enabling the transition from pure prototyping, where 95% of pilots fail, to productive and sustainable operations. In doing so, it upholds the core principles of trust and transparency that IBM established even before GenAI and AgenticAI:

1. The purpose of AI is to augment human intelligence

2. Data and insights belong to their creators

3. New technologies must be transparent and explainable